Imitation Learning with a Low-Cost Robotic Arm

Imagine a robotic arm that can learn to perform tasks just by watching human demonstrations!

Overview

This project demonstrates how a low-cost robotic arm (Koch) can learn from human demonstrations through imitation learning with an Action Chunking with Transformers (ACT) policy.

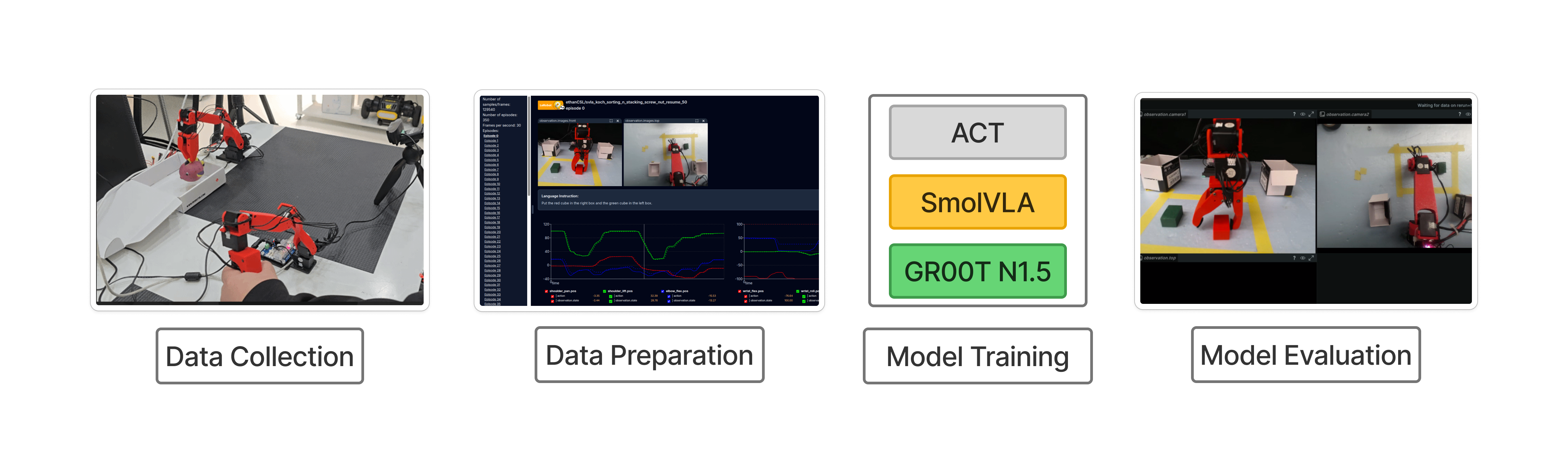

LeRobot pipeline: training imitation learning models on the Koch robot

1. Data Collection

The first step is collecting high-quality demonstration data from human teleoperation.

Dataset Format

We use the LeRobot dataset format, which stores each episode as Parquet files containing the observations (the follower arm's joint states) and the actions (the leader arm's joint states), plus MP4 videos for the top and front camera views.

# Robot Joint States (6-DOF)

- "shoulder_pan.pos"

- "shoulder_lift.pos"

- "elbow_flex.pos"

- "wrist_flex.pos"

- "wrist_roll.pos"

- "gripper.pos"

# Camera Observations

- "observation.images.front"

- "observation.images.top"

LeRobot dataset structure with joint positions and camera observations
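To make the layout concrete, here is a minimal sketch of what one per-timestep record could look like, using the joint and camera keys listed above. The helper `make_frame`, the per-joint dictionary layout, and the 30 fps default are assumptions made for illustration; this is not the LeRobot library's actual schema or API.

```python
# Joint names as listed above; the ordering is assumed to match the state/action vectors.
JOINT_KEYS = [
    "shoulder_pan.pos",
    "shoulder_lift.pos",
    "elbow_flex.pos",
    "wrist_flex.pos",
    "wrist_roll.pos",
    "gripper.pos",
]

# Camera streams are stored as MP4 videos, so a record only needs to name them.
CAMERA_KEYS = ["observation.images.front", "observation.images.top"]


def make_frame(follower_pos, leader_pos, episode_index, frame_index, fps=30):
    """Build one illustrative record (hypothetical helper, assumed 30 fps)."""
    if len(follower_pos) != len(JOINT_KEYS) or len(leader_pos) != len(JOINT_KEYS):
        raise ValueError("expected one value per joint")
    return {
        # Observation: where the follower arm actually is.
        "observation.state": dict(zip(JOINT_KEYS, follower_pos)),
        # Action: where the leader arm is, i.e. the commanded target.
        "action": dict(zip(JOINT_KEYS, leader_pos)),
        "episode_index": episode_index,
        "frame_index": frame_index,
        "timestamp": frame_index / fps,
    }


frame = make_frame([0.0] * 6, [1.0] * 6, episode_index=0, frame_index=15)
```

Keeping observation and action under the same joint names makes it easy to check that the follower is tracking the leader during recording.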

Control Method

Human demonstrations are collected via teleoperation using a leader-follower setup, where the operator controls a leader arm and the follower arm mimics the movements.

Leader-follower teleoperation for data collection
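The leader-follower loop can be sketched roughly as follows. `FakeArm` and `record_episode` are hypothetical stand-ins for illustration, not the real Koch driver or LeRobot recording code; a real setup would also capture camera frames and pace the loop at a fixed rate.

```python
class FakeArm:
    """Stand-in for a real arm driver (hypothetical interface)."""

    def __init__(self, n_joints=6):
        self.positions = [0.0] * n_joints

    def read_positions(self):
        return list(self.positions)

    def write_positions(self, targets):
        self.positions = list(targets)


def record_episode(leader, follower, n_steps):
    """Mirror the leader onto the follower and log (observation, action) pairs."""
    frames = []
    for _ in range(n_steps):
        action = leader.read_positions()         # what the operator commands
        observation = follower.read_positions()  # follower state before it moves
        follower.write_positions(action)         # follower mimics the leader
        frames.append({"observation": observation, "action": action})
    return frames


leader, follower = FakeArm(), FakeArm()
leader.positions = [1.0] * 6  # pretend the operator moved the leader arm
episode = record_episode(leader, follower, n_steps=3)
```

Each logged pair ties the follower's state at a timestep to the leader position commanded at that same timestep, which is the supervision signal the policy later learns from.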

2. Model Training

After collecting demonstration data, we train imitation learning models to predict robot actions from visual observations.

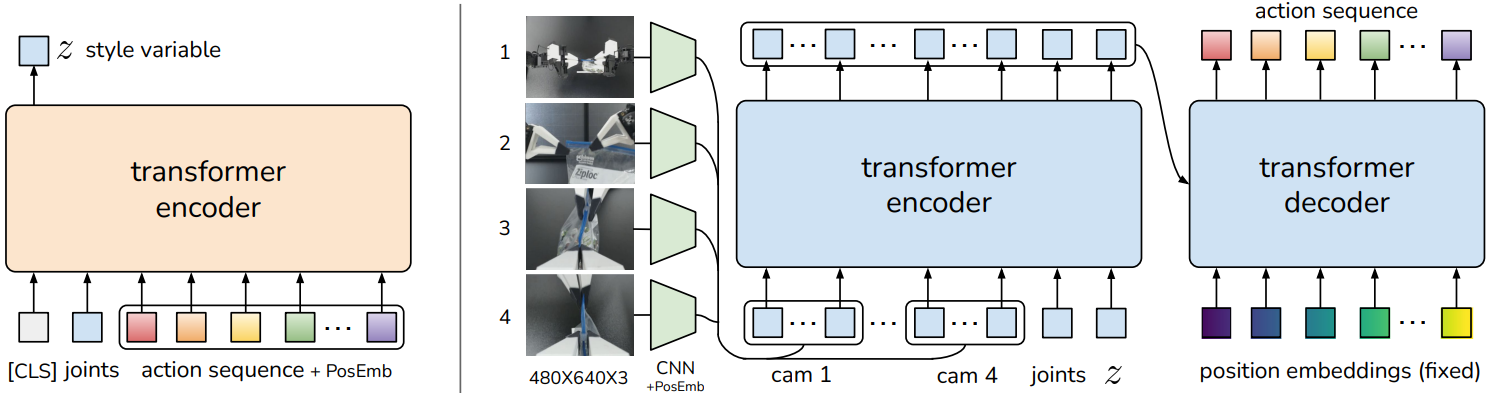

ACT (Action Chunking with Transformers)

A pure imitation learning approach that predicts sequences of actions ("chunks") rather than single actions. It uses a transformer encoder-decoder architecture with a CVAE (Conditional Variational Autoencoder) to model the distribution over actions.

ACT Architecture (Source: ACT Paper)
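As a rough illustration of how a CVAE over action chunks is typically trained, the sketch below combines an L1 reconstruction term on the predicted chunk with a KL term that pulls the latent toward a standard normal, which is our reading of the objective described in the ACT paper. The function names and the `beta` value are illustrative assumptions, and plain Python lists stand in for tensors.

```python
import math


def l1_loss(pred_chunk, target_chunk):
    """Mean absolute error over every joint of every action in the chunk."""
    total, count = 0.0, 0
    for pred, target in zip(pred_chunk, target_chunk):
        for p, t in zip(pred, target):
            total += abs(p - t)
            count += 1
    return total / count


def kl_to_standard_normal(mu, logvar):
    """KL(N(mu, exp(logvar)) || N(0, I)) for a diagonal Gaussian latent."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))


def cvae_loss(pred_chunk, target_chunk, mu, logvar, beta=10.0):
    """Reconstruction plus weighted KL; beta here is an illustrative weight."""
    return l1_loss(pred_chunk, target_chunk) + beta * kl_to_standard_normal(mu, logvar)


# One chunk of two 2-joint actions, with a 1-dimensional latent.
loss = cvae_loss(
    pred_chunk=[[0.0, 0.0], [1.0, 1.0]],
    target_chunk=[[0.0, 1.0], [1.0, 2.0]],
    mu=[0.0],
    logvar=[0.0],
)
```

With `mu` and `logvar` at zero the KL term vanishes, so the loss reduces to the reconstruction error alone.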

✅ Strengths

- Fast training (no VLM backbone)

- Lightweight (~25M parameters)

- Good for single-task learning

⚠️ Limitations

- No language understanding

- Requires task-specific training

- Limited generalization

3. Deployment

The trained model is deployed on the robot for real-time inference and autonomous task execution.

Real-time Inference

The model runs at ~30 Hz, predicting action chunks that the robot controller executes in real time.
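One simple way to execute action chunks at a fixed rate is to queue each predicted chunk and query the model again only when the queue runs dry. The sketch below assumes this open-loop scheme; the ACT paper also describes temporal ensembling of overlapping chunks, which is not shown here. `run_policy` and its callable arguments are hypothetical names, not the project's actual deployment code.

```python
import time
from collections import deque


def run_policy(predict_chunk, get_observation, send_action, n_steps,
               hz=30.0, sleep=time.sleep):
    """Run a chunked policy at a fixed rate; returns how often the model was queried."""
    period = 1.0 / hz
    queue = deque()
    n_predictions = 0
    for _ in range(n_steps):
        start = time.monotonic()
        if not queue:
            # Queue is empty: predict a fresh chunk from the latest observation.
            # predict_chunk is assumed to return a non-empty list of actions.
            queue.extend(predict_chunk(get_observation()))
            n_predictions += 1
        send_action(queue.popleft())
        # Sleep off whatever is left of this control period.
        remaining = period - (time.monotonic() - start)
        if remaining > 0:
            sleep(remaining)
    return n_predictions


# Toy usage: the "policy" repeats the observation 4 times; sleeping is disabled.
sent = []
calls = run_policy(
    predict_chunk=lambda obs: [obs] * 4,
    get_observation=lambda: len(sent),
    send_action=sent.append,
    n_steps=10,
    sleep=lambda seconds: None,
)
```

With a chunk length of 4, ten control steps need only three model queries, which is what makes chunked inference cheap enough to keep up with the control rate.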

Autonomous task execution after training on 50 episodes

Real-time action prediction using the trained ACT model